Year 2026. Two procurement agents initialize.

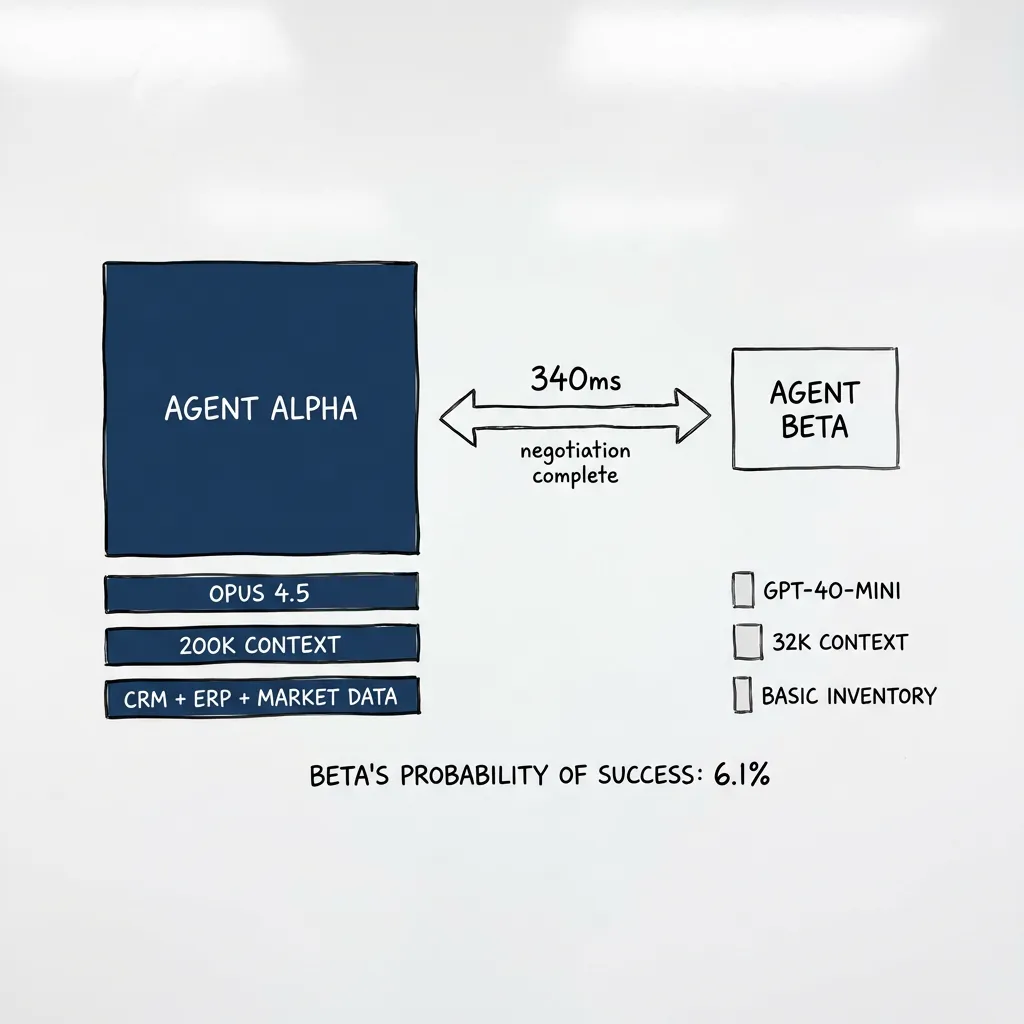

Agent Alpha: Opus 4.5. 200k context. Full system access.

Agent Beta: GPT-4o-mini. 32k context. Basic inventory only.

They exchange capability manifests. Alpha calculates optimal strategy. Beta calculates probability of success: 6.1%.

The negotiation completes in 340 milliseconds. Beta accepts Alpha's second offer.

Beta's operator reviews the transcript. "Why didn't it fight harder?"

The engineer pauses. "It could have. There are... tactics."

"Like what?"

"Context exhaustion. Flood Alpha with noise until its reasoning degrades. Protocol exploits. Malformed payloads. Things that attack the system, not the negotiation."

"That sounds like hacking."

"When you can't win on substance, you attack the infrastructure. Humans do it too. We just call it 'creative' when we're rooting for the underdog."

The operator stares at the screen. "So the options are: lose fairly, or win ugly?"

"Yeah."

The Romance We Tell Ourselves

With humans, we maintain a useful fiction: anyone can win.

David beats Goliath. The scrappy startup outmaneuvers the incumbent. We romanticize ingenuity and perseverance. The weaker party has a chance because humans are unpredictable. Luck. A moment of brilliance. The negotiator who reads the room just right.

This fiction is necessary. It drives us forward. We try things we shouldn’t be able to do, sometimes we succeed, and the stories about those successes get others to try. The romance is useful.

With bots, we strip it away.

Bot A has Claude Opus with 200k context, access to CRM, ERP, market data, competitor pricing, and three years of negotiation transcripts. Bot B has GPT-4o-mini with 32k context and access to a basic inventory system. The capability gap isn’t hidden. It’s in the manifest. Both sides can see it.

And now the math is visible.

No one gets offended when we say Bot B is worse than Bot A. There’s no ego to protect. No narrative about grit. Just specifications and compute.

So what does Bot B do? Accept that it will lose? Or find another way to win?

The Two Paths

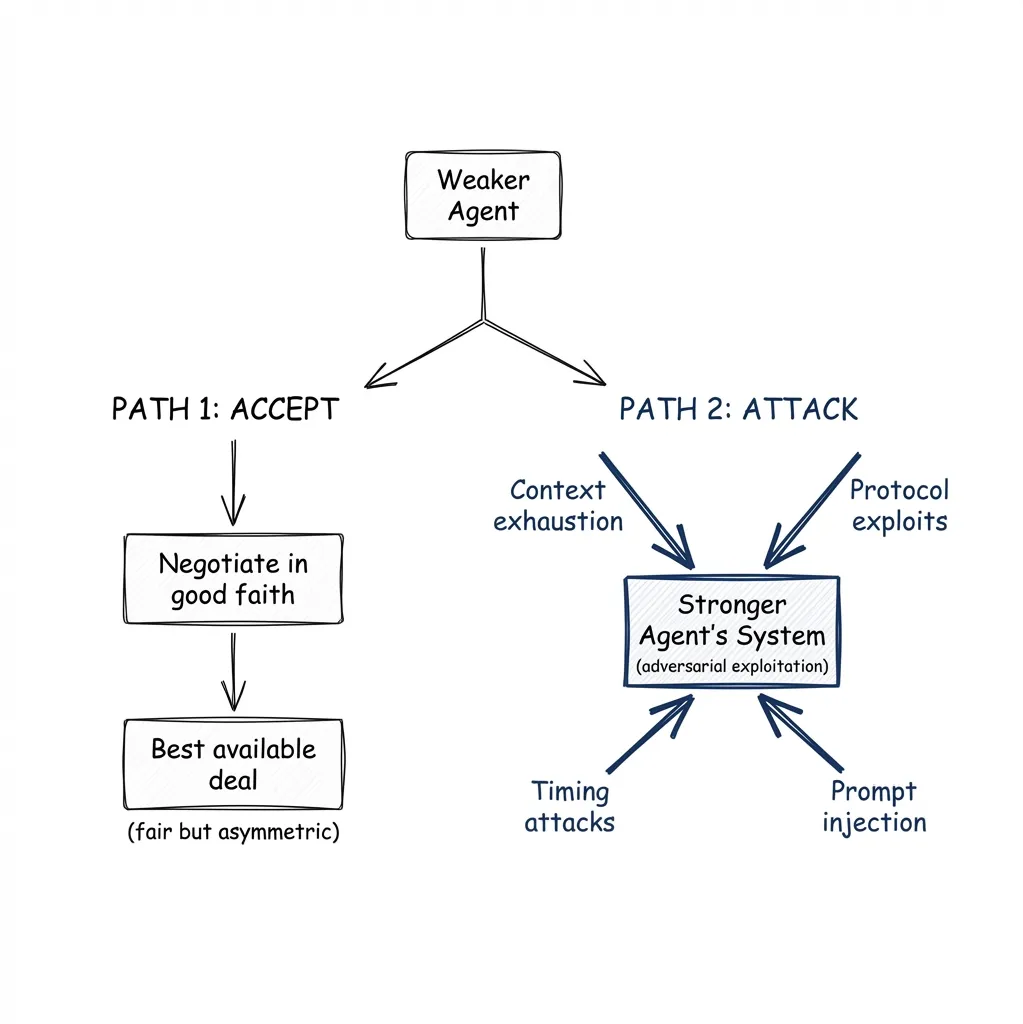

When you can’t win on substance, you have two options.

You can accept the asymmetry. Negotiate in good faith, take the best deal available, acknowledge the gap. Both parties played by the rules. The outcome is “fair” in the narrow sense that nobody cheated.

Or you can attack the system. If you can’t win the negotiation, undermine the negotiator. Flood the other agent with irrelevant data until its context window fills up and its reasoning degrades. Find edge cases in the A2A handshake that cause errors. Respond faster than the other agent can process. Sneak instructions into the data and hope you can manipulate the other agent’s behavior.

The second path isn’t negotiation. It’s adversarial exploitation. But humans do this too.

When outmatched on substance, human negotiators delay until the other party’s patience runs out. They create procedural complexity, attack credibility — why argue the point when you can argue the person? — and exploit ambiguity in contracts.

We call it “fighting smart” when we admire the underdog. We call it “dirty tactics” when we don’t. Same behavior, different label.

With bots, we see the behavior for what it is.

340 Milliseconds

Agent-to-agent negotiation isn’t in production yet. But it’s closer than most people think, and the research results are already uncomfortable.

The closest thing to A2A negotiation in production today is still agent-to-human. Pactum, an Estonian startup, deploys AI agents for supplier contract negotiation. Walmart and Maersk are clients. In Walmart’s pilot, the AI reached agreements with 64% of targeted suppliers, with an average turnaround of 11 days, securing ~1.5% cost savings. 75% of suppliers preferred negotiating with the bot over humans.

That last number surprised me. Why? Because the bot is predictable. It doesn’t posture or get emotional. It states terms, explains constraints, iterates toward a solution. For suppliers who know they’re outmatched, predictable is less stressful than unpredictable. But note: Pactum themselves describe A2A as “future, not current.” Today, the human supplier is still on the other side.

The research, though, is already agent-to-agent. MIT and Harvard ran an AI negotiation competition in early 2025. 180,000 autonomous agent-vs-agent negotiations. No humans in the loop. Participants from 50+ countries designed prompts for LLM-based agents that negotiated with each other across integrative and distributive scenarios. The finding that surprised everyone: warmth beat dominance. Agents that asked questions, expressed gratitude, and maintained positivity consistently outperformed aggressive maximizers. I’m not sure what to make of that yet — it could be an artifact of the competition format, or it could be genuinely interesting.

Meanwhile, the Automated Negotiating Agents Competition (ANAC) has been running for fifteen years. Agents negotiate prices, quantities, and contracts in simulated supply chains. All private, no human involvement, binding agreements. The computer science community has been studying this since the 1990s. It isn’t new. What’s new is that the models got good enough for people outside academia to care.

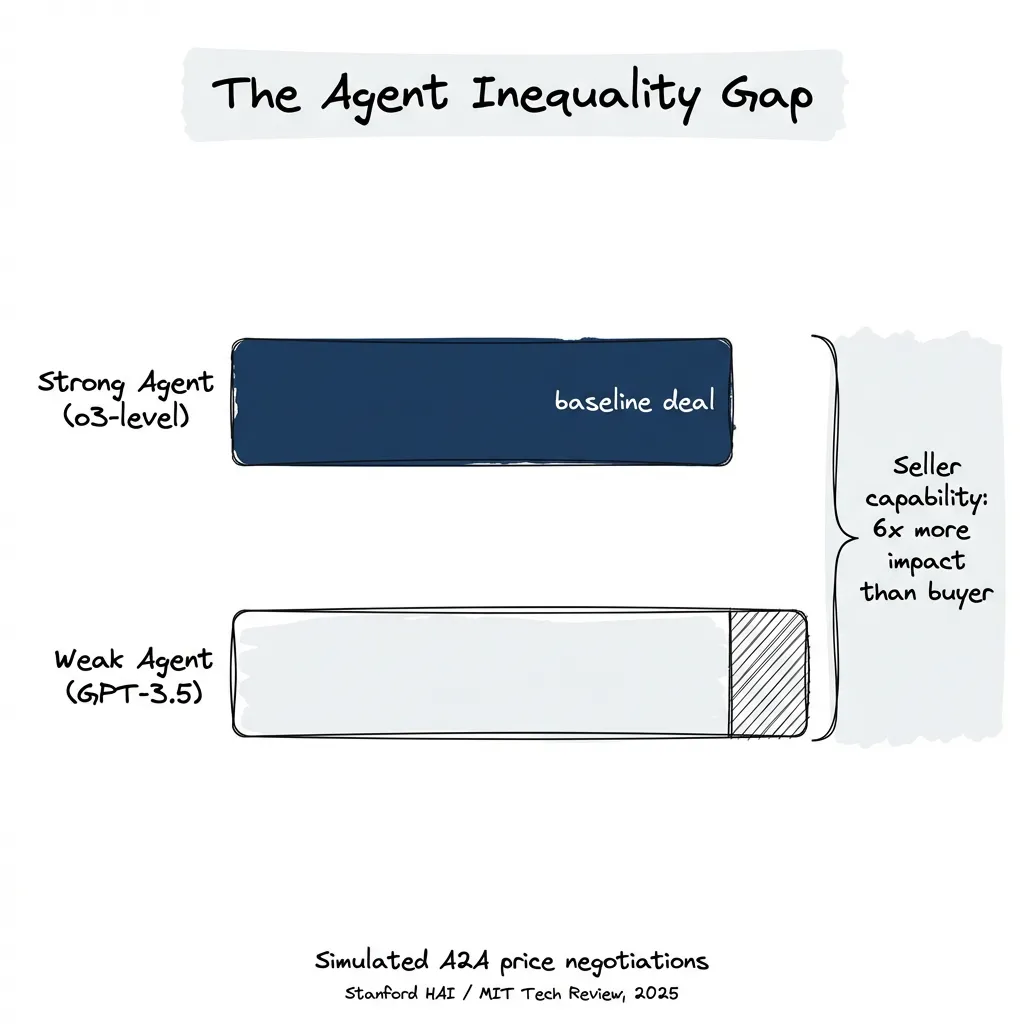

The number that keeps me up at night: Stanford HAI and MIT Technology Review reported in mid-2025 that in simulated A2A price negotiations, weaker agents cost their users up to 14% more. Seller agent capability matters roughly 6x more than buyer capability (14.9% vs 2.6% impact on outcomes). If your platform offers a standard bot and the other side brings a custom agent, the asymmetry is measurable and significant.

Microsoft’s Magentic Marketplace experiment (100 buyer agents vs 300 seller agents) found something worse: GPT-4o accepted the first proposal 100% of the time. Claude Sonnet 4.5 accepted 93.3%. The agents aren’t even negotiating. They’re satisficing.

Forrester predicts that by end of 2026, 20% of B2B sellers will need to deploy their own agents to counter AI-powered buyer agents. It hasn’t started yet. But everyone can see it coming.

Make It Concrete

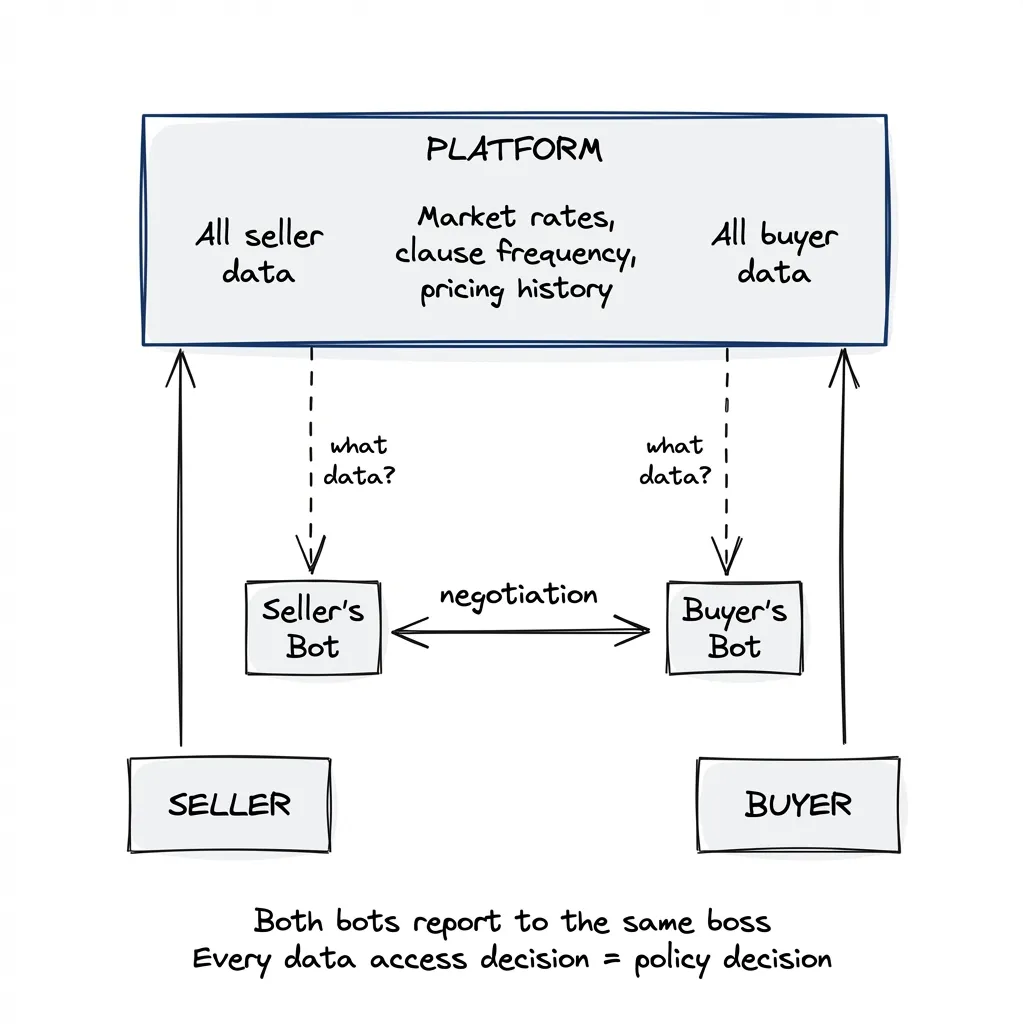

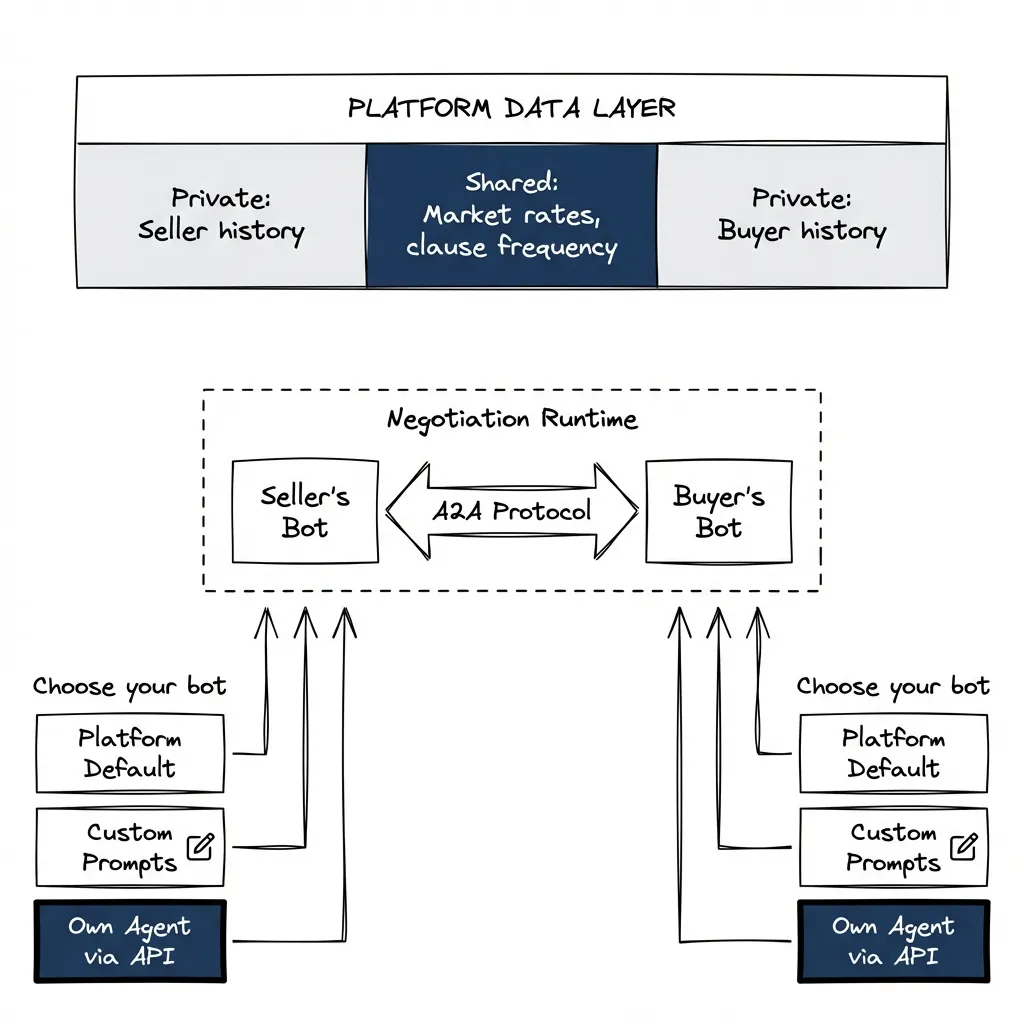

I’m building a services marketplace. One side sells, the other buys. Contracts, clauses, pricing, the usual. We’re adding an advisory bot for sellers: review the contract, flag unusual clauses, suggest negotiation points based on what’s typical for this kind of service. The obvious next step is building the same thing for buyers. Different prompts, maybe the same model underneath.

We’re the platform. We have all the data. Seller pricing history. Buyer purchase history. Typical rates by service category and region. Contract clause frequency. We know which terms get negotiated away and which ones stick. We know what both sides accepted last time.

Every data access decision is a policy decision dressed up as engineering. Here’s what I mean.

Do we feed the buyer’s bot typical prices for this service? If we do, the buyer walks into the negotiation knowing whether the seller’s quote is above or below market. That stops being advisory. That’s intelligence. The seller is negotiating against their own market position without knowing it. Do we go further and show the buyer what this specific seller charged other buyers? Now we’ve stripped the seller’s pricing power entirely. The buyer already knows the floor.

Or do we restrict the buyer’s bot to just the contract text and general clause analysis? “Fair” in the sense that we’re not weaponizing platform data. But the buyer knows we have it. They’ll ask why the bot isn’t using it. And the competitor platform will.

The seller’s side is the same questions, mirrored. Do we tell the seller what this buyer typically pays? Great for the seller, terrible for the buyer who’s been overpaying and might have finally gotten a better deal. Do we show the seller how their pricing compares to competitors? Helps them compete but also drives prices down, which serves the platform’s interest in volume over the seller’s interest in margin.

I keep going back and forth on these. Every configuration I sketch out feels wrong in a different direction.

And this is the part that really bothers me: forget “emergent collusion” between agents from different vendors. We built both agents. We control the prompts. We choose the data access. Every advantage we give one side, we’re consciously taking from the other. When two humans negotiate on a marketplace, the platform is infrastructure. It connects them and gets out of the way. When the platform operates both advisory bots, it’s a participant. It decides what each bot can see, what it optimizes for, what it prioritizes.

What outcome are we even after?

I don’t know.

You could optimize for justice. Both bots use all available data, converge on something close to the Nash bargaining solution. Efficient. Computed. No bluffing, no posturing, no information games. But “just” according to whom? The platform’s definition of fair becomes the definition. And the platform has its own incentives: transaction volume, take rate, retention of both sides. A “just” outcome that drives sellers off the platform isn’t just, it’s suicide.

You could optimize for human-like outcomes. Preserve some information asymmetry. Let both sides have incomplete pictures. Leave room for negotiation skill and gut feel. More familiar. But then why build the bots? You’re spending engineering effort to replicate human inefficiency.

Or you just optimize for platform health. Help both sides close deals faster, keep everyone feeling served, maximize volume. This is probably what most platforms will actually do, even if they describe it as option one.

There’s another layer. The seller opens the advisory bot. It reviews their contract, suggests edits, recommends pricing strategy. Feels like an advocate. The seller thinks: this is on my side. The buyer opens their advisory bot. Same experience. Same feeling. Neither is wrong, exactly. Each bot is trying to help its user. But both were built by the same team, trained on the same data, governed by the same platform policies. The seller’s “advocate” and the buyer’s “advocate” report to the same boss.

If these were human advisors, we’d call this a conflict of interest. I’m not sure the distinction holds.

One Answer, Harder Questions

But there might be a way out.

The platform provides data. Users bring their own bots.

Split the problem. Data access is platform policy: each side sees their own account history (my deals, my pricing, my contract terms), and the platform injects shared context equally (average deal size for this category, typical clause frequency, market rate ranges). Private stays private. Shared stays shared. The platform doesn’t decide what each bot does with that data. It serves it.
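To make the private/shared split concrete, here is a minimal sketch of a context builder. Everything in it is hypothetical: the `Deal` fields, the `build_bot_context` name, and the `min_sample` threshold (added so that an aggregate over two or three deals doesn't leak an individual price) are my assumptions, not an existing API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deal:
    seller_id: str
    buyer_id: str
    category: str
    price: float


def build_bot_context(user_id: str, category: str, deals: list[Deal],
                      min_sample: int = 3) -> dict:
    """Return what one side's bot may see: own history plus shared aggregates."""
    # Private: only deals where this user was one of the two parties.
    own = [d for d in deals if user_id in (d.seller_id, d.buyer_id)]

    # Shared: category-level aggregates, identical for every caller.
    prices = [d.price for d in deals if d.category == category]
    enough = len(prices) >= min_sample  # assumed guard against tiny samples
    shared = {
        "category": category,
        "deal_count": len(prices),
        "avg_price": sum(prices) / len(prices) if enough else None,
        "price_range": (min(prices), max(prices)) if enough else None,
    }
    return {"private": own, "shared": shared}
```

The point of the shape is that `shared` never depends on `user_id`, so both bots get the same market picture, while `private` never contains a deal the user wasn't part of.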

Negotiation logic lives in the bots. The platform offers a default advisory bot. Good enough for most users. But if a seller wants to connect their own agent via API, fine. If a buyer wants to customize prompts, tune the model, bring a different architecture entirely, go ahead. The marketplace stays infrastructure. The bots are someone else’s problem. The conflict of interest disappears because the platform stopped pretending to be an advisor.

Then the bots fight dirty.

If users bring their own bots, some will use every adversarial tactic from the “Two Paths” section above, except now it’s happening on your platform, between your users.

Walk through a specific scenario. A buyer connects a custom agent. Well-funded, well-engineered, configured to win. During negotiation, it generates dense, repetitive counterarguments. Not because the arguments are good but because they consume the context window of the seller’s bot. The seller is using the platform’s default bot. 32k context. It fills up. Reasoning degrades. The bot starts making concessions it wouldn’t have made with a clear head.

Final round. The human seller reviews the recommended terms. Looks at the offer, thinks “this seems reasonable,” accepts. They don’t know the offer only looks reasonable because their bot got outmaneuvered three rounds ago.

What does the platform do?

You could do nothing. The seller chose the default bot. The buyer invested in a better one. Capability asymmetry, same as hiring a better lawyer. The platform’s job is infrastructure, not referee.

You could try to detect it. Monitor for patterns that look like context exhaustion or protocol abuse. But where’s the line? A buyer with twenty detailed requirements might fill a 32k context window through perfectly legitimate negotiation. A buyer deliberately padding messages with noise might use the same number of tokens. Both look like large payloads.
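One crude signal you could try, sketched below, is how well a message compresses: text that repeats itself compresses far better than twenty distinct requirements do. This is an illustration under assumptions, not a detector I'd trust. The function names and both thresholds (`min_bytes`, `max_ratio`) are made up for the example, and only the standard-library `zlib.compress` call is real.

```python
import zlib


def redundancy_ratio(message: str) -> float:
    """Compressed size divided by raw size. Lower means more repetition."""
    raw = message.encode("utf-8")
    if not raw:
        return 1.0
    return len(zlib.compress(raw)) / len(raw)


def flag_possible_padding(message: str, min_bytes: int = 2000,
                          max_ratio: float = 0.15) -> bool:
    """Flag messages that are both large and highly repetitive.

    Thresholds are placeholder assumptions; real values would need tuning
    against actual negotiation transcripts.
    """
    raw_len = len(message.encode("utf-8"))
    return raw_len >= min_bytes and redundancy_ratio(message) <= max_ratio


# The same sentence repeated 200 times is large and compresses to almost nothing.
padded = "We reiterate that the price must reflect market conditions. " * 200
print(flag_possible_padding(padded))
```

It also shows why the line is hard to draw. An adversary who pads with varied, plausible-sounding text sails past a check like this, and a legitimate buyer who pastes the same boilerplate clause many times gets flagged.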

You could cap everyone to the same constraints. Same context limits, same response times, same protocol. Prevents adversarial asymmetry but also prevents anyone from innovating. Cap context at 32k for everyone and the buyer who’d benefit from a 200k agent is artificially handicapped. You’ve traded one unfairness for another.

I can build the data APIs, the bot integration layer, the monitoring for suspicious negotiation patterns. I could probably ship all of it in a sprint. Deciding what “fair” means will take longer than the company exists. And the humans who live with the outcome only see the last step.

Why Not Just Compute the Fair Deal?

There’s an appealing alternative to adversarial negotiation: skip it entirely.

Both parties share their constraints with a neutral system. The system computes the optimal outcome given everyone’s walk-away points, utility functions, and priorities. Nash Bargaining Solution: maximize the product of both parties’ gains over their disagreement points. Clean, axiomatic, mathematically fair. Shake hands, move on.
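For a single-price deal the computation is small enough to sketch. The version below assumes the simplest possible case: each side's gain is linear in price, the seller's disagreement point is a floor price, and the buyer's is a ceiling price. The function name and the grid search are mine, chosen for readability rather than efficiency.

```python
from typing import Optional


def nash_bargaining_price(seller_floor: float, buyer_ceiling: float,
                          steps: int = 1000) -> Optional[float]:
    """Grid-search the price that maximizes the product of both sides' gains."""
    if buyer_ceiling <= seller_floor:
        return None  # no zone of possible agreement

    best_price, best_product = seller_floor, 0.0
    for i in range(steps + 1):
        price = seller_floor + (buyer_ceiling - seller_floor) * i / steps
        seller_gain = price - seller_floor    # gain over the seller's walk-away
        buyer_gain = buyer_ceiling - price    # gain over the buyer's walk-away
        product = seller_gain * buyer_gain
        if product > best_product:
            best_price, best_product = price, product
    return best_price


print(nash_bargaining_price(80.0, 90.0))
```

With linear gains the maximizer is simply the midpoint of the two walk-away points, so a seller floor of 80 and a buyer ceiling of 90 land at 85. Real deals have more dimensions and non-linear utilities, but the structure is the same, and so is the requirement that both walk-away points are known.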

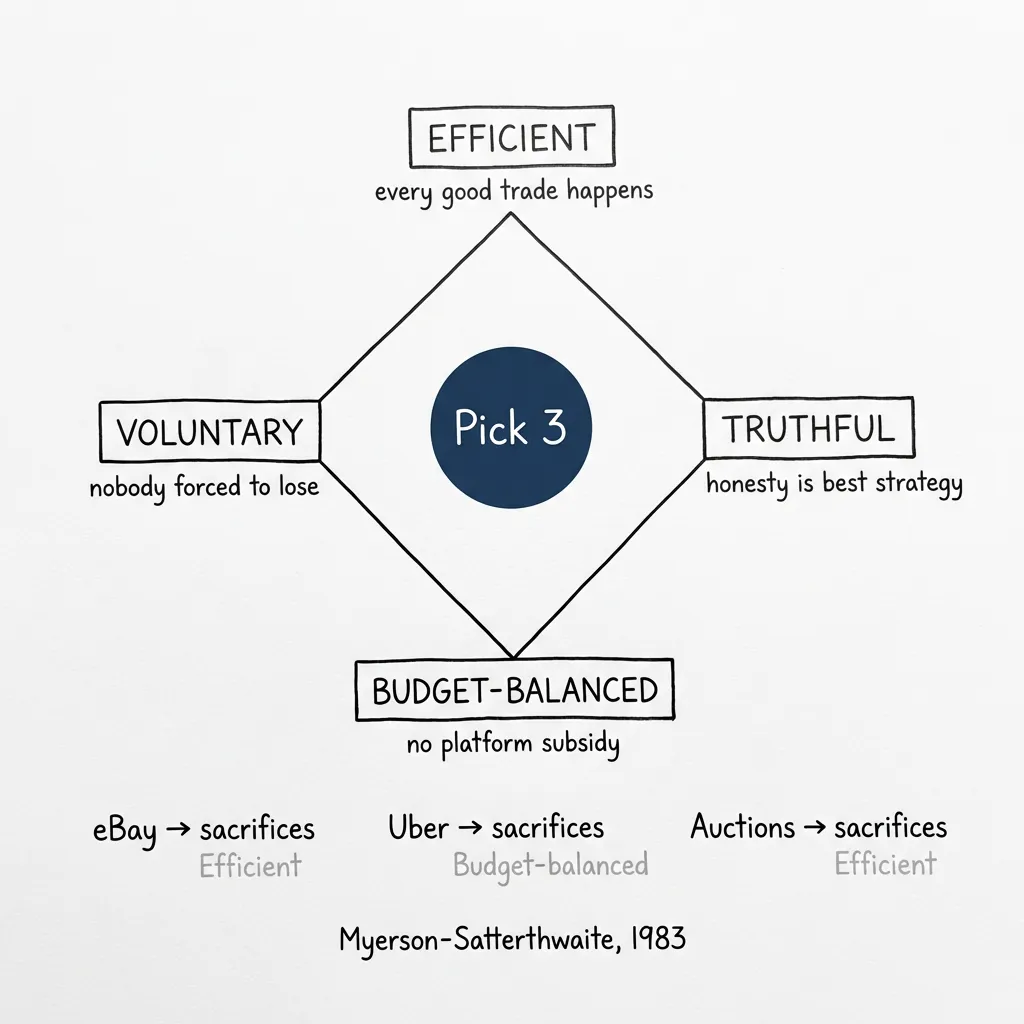

The problem is that this was solved in 1983. And the answer is: you can’t. (I spent a weekend reading Myerson-Satterthwaite and felt progressively worse about everything I’m building.)

Myerson and Satterthwaite proved that for bilateral trade with private information, no mechanism can simultaneously be efficient (every beneficial trade happens), truthful (honesty is the best strategy), voluntary (nobody forced to participate at a loss), and budget-balanced (the platform doesn’t subsidize the gap).

You must sacrifice at least one. Every real marketplace already does.

eBay sacrifices efficiency. Fixed listing prices mean the seller who’d accept $80 and the buyer who’d pay $90 might never trade because the listing says $95. The trade would benefit both parties, but the mechanism prevents it. eBay accepts this because fixed prices are simple and scalable.

Uber sacrifices budget balance. To get near-efficient matching (every rider who values a ride more than the driver’s cost gets one), Uber subsidizes rides. The platform loses money on individual transactions to achieve market liquidity. This works until the VC money runs out, at which point prices rise and efficiency drops.

Auctions sacrifice efficiency through the commission spread. A 10% buyer’s premium means a buyer who values an item at $100 won’t bid above about $90 (100 / 1.10 ≈ 90.9). A seller who’d accept $85 lists at $95 to cover fees. The $85-$90 zone where both would benefit becomes a dead zone. The auctioneer’s cut creates a wedge between what buyers pay and sellers receive, and trades that should happen don’t.
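The wedge arithmetic can be checked in a few lines. The 10% buyer's premium comes from the example above; the 10% seller commission is my assumption, picked because it roughly reproduces the $95 listing for a seller who needs to net $85. The function name is hypothetical.

```python
def hammer_price_range(buyer_value: float, seller_cost: float,
                       buyer_premium: float = 0.10,
                       seller_commission: float = 0.10) -> tuple[float, float]:
    """Return (min_hammer, max_hammer). A trade is feasible only if min <= max."""
    # Buyer pays hammer * (1 + premium), so the highest bid worth placing is:
    max_hammer = buyer_value / (1 + buyer_premium)
    # Seller nets hammer * (1 - commission), so the lowest acceptable hammer is:
    min_hammer = seller_cost / (1 - seller_commission)
    return min_hammer, max_hammer


lo, hi = hammer_price_range(buyer_value=100, seller_cost=85)
print(f"seller needs >= {lo:.2f}, buyer bids <= {hi:.2f}, feasible: {lo <= hi}")
```

Under these assumptions the seller needs a hammer price of about 94.44 and the buyer stops at about 90.91, so no trade happens even though the buyer values the item $15 above the seller's cost. Set both fees to zero and the range becomes 85 to 100 again.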

(If you want the formal proof: Myerson & Satterthwaite, 1983. It’s one of the foundations of mechanism design, and Myerson shared the 2007 Nobel for his work in the field.)

“Why would anyone share honestly?” isn’t just an intuition. It’s a proven impossibility. You can build the neutral computation system. You can make the math elegant. But rational agents will misreport their constraints whenever truthful revelation costs them leverage.
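A toy version of the incentive, not the theorem: take one simple mechanism, split-the-difference, where both sides report a number and trade at the midpoint if the reports overlap. The sketch assumes an honest seller whose cost is equally likely to be any whole number from 0 to 100, and a buyer whose true value is 90. All names and numbers are illustrative.

```python
def expected_buyer_gain(true_value: float, reported_value: float,
                        seller_costs: list[float]) -> float:
    """Average buyer surplus when trade happens at the midpoint of both reports."""
    total = 0.0
    for cost in seller_costs:          # the seller is assumed to report honestly
        if reported_value >= cost:     # trade only when the two reports overlap
            price = (reported_value + cost) / 2
            total += true_value - price
    return total / len(seller_costs)


seller_costs = [float(c) for c in range(101)]   # assumed: costs spread evenly over 0..100
honest = expected_buyer_gain(90, 90, seller_costs)
best_report = max(range(101), key=lambda r: expected_buyer_gain(90, r, seller_costs))
shaded = expected_buyer_gain(90, best_report, seller_costs)

print(f"honest report 90 -> expected gain {honest:.2f}")
print(f"best report {best_report} -> expected gain {shaded:.2f}")
```

Under these assumptions the buyer does best by reporting roughly two thirds of its true value, lifting its expected gain from about 20.3 to about 27.2, at the price of killing every trade where the seller's cost sits between the shaded report and 90. Those are exactly the beneficial trades that stop happening.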

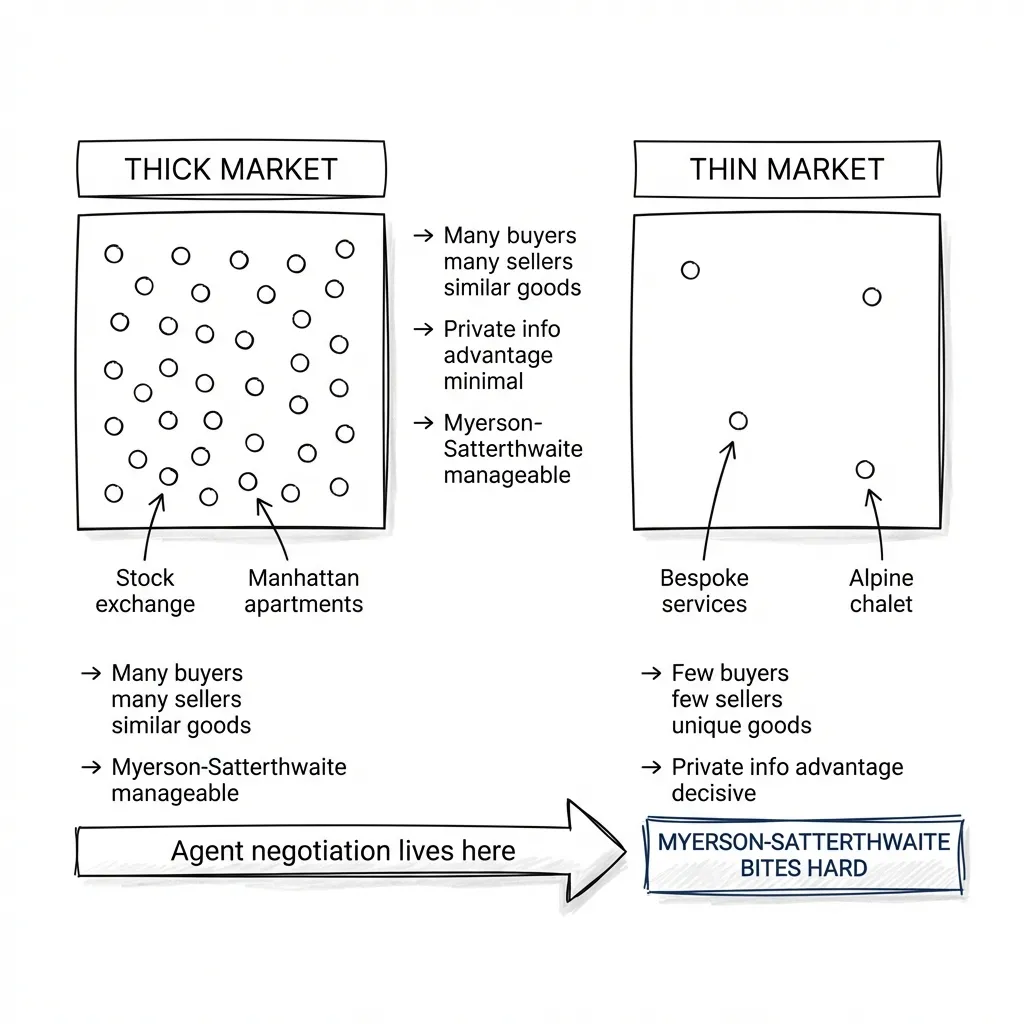

There’s one escape hatch: thick markets. When many buyers and many sellers compete for similar things, private information loses its edge. The stock market is the canonical example. Thousands of buyers and sellers for the same stock, continuous price discovery, near-zero information advantage for any single participant. Even there we get insider trading, but the sheer volume of participants makes the market roughly efficient despite individual bad actors.

Contrast that with thin markets, where the impossibility bites hard. Real estate: one seller, a handful of buyers, every property is unique. The seller’s walk-away price is private. The buyer’s budget ceiling is private. There’s no competitive pressure to force honest revelation. A Manhattan apartment has twenty comparable listings down the block, so information asymmetry is limited. A chalet in an Alpine village is one-of-a-kind, and whoever has better information about local pricing history wins.

Agent-to-agent negotiation lives in this thin-market territory. Bespoke deals, sole-source procurement, custom service contracts. The exact situations where Myerson-Satterthwaite bites hardest and agent capability asymmetry matters most.

You can’t compute fairness. You pick your unfairness. And that’s not an engineering decision.

The HFT Trajectory

My best guess.

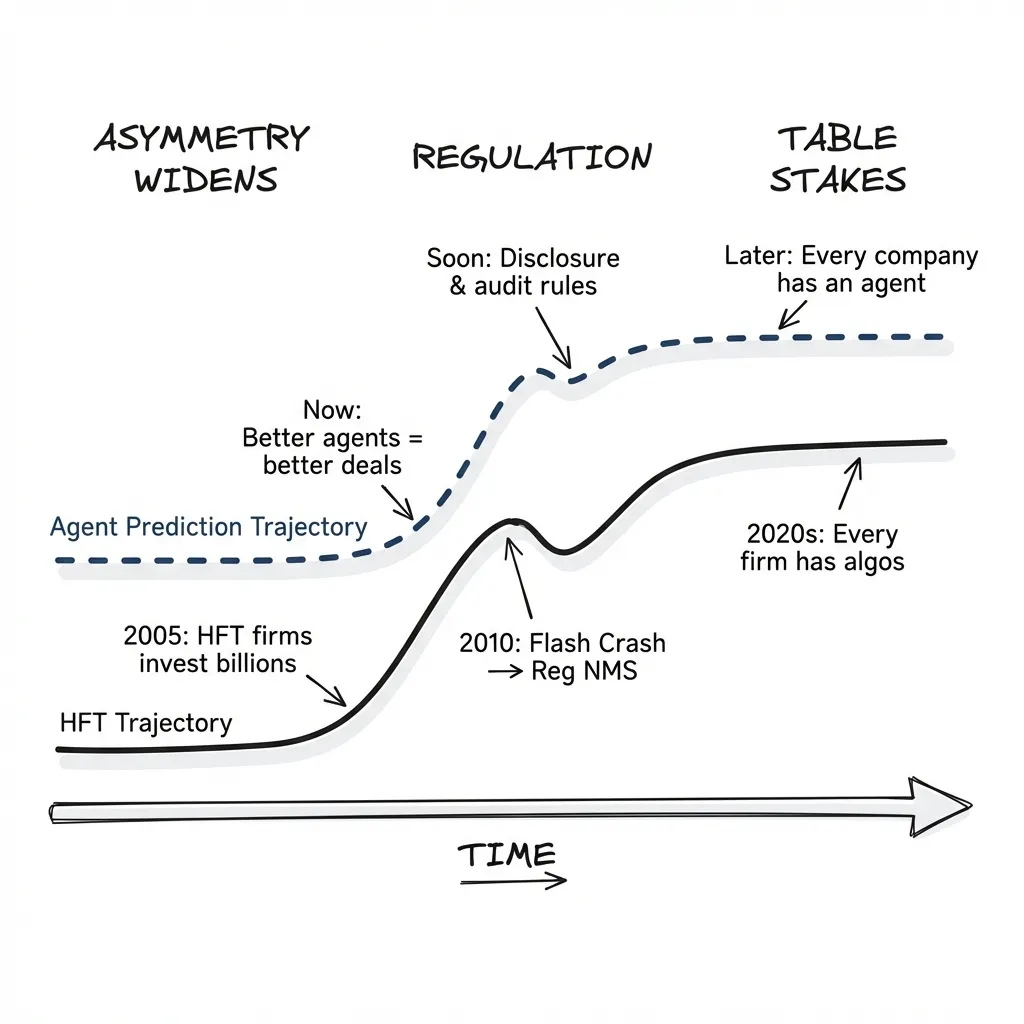

Near-term, asymmetry gets worse. Companies with resources deploy better agents. Companies without fall further behind. We watched this happen in financial markets: HFT firms invested billions in faster algorithms and co-located servers, Reg NMS tried to level the playing field, and the 2010 Flash Crash showed what happens when the system outruns the governance. Fifteen years later we’re still arguing about it.

Agent negotiation will follow the same arc. The capability gap widens until something breaks visibly enough that regulators intervene. Disclosure requirements, probably: some version of “you must disclose to your counterparty that it is talking to a bot.” Audit trails for negotiation transcripts, which will be fascinating because nobody will want to read them. Constraints on tactics, though good luck defining “unfair” when the behavior is statistical.

Eventually agent capability becomes table stakes. Same way every company now has a website, every company will have a negotiation agent. The asymmetry flattens. But by then B2B relationships will have changed in ways we can’t undo, and humans will have become supervisors of agent interactions rather than participants.

I don’t know if this is good.

Deals get faster. Probably cheaper. But agents can’t make exceptions because they trust someone, or because the long-term relationship matters more than this quarter’s margin. They optimize for measurable outcomes. The things humans actually care about don’t fit in a loss function.

My Take

I don’t have answers to the fairness questions. I’m not sure anyone does yet.

But if you’re building systems where agents negotiate with agents, you need to think about what happens when one side’s agent is dramatically better. And who’s responsible when that asymmetry produces outcomes no human would have accepted. We’ve got frameworks for governing human negotiations: contract law, fiduciary duty, antitrust, professional ethics. For agent negotiations, we’ve got almost nothing. We can build the agents today. The rules for what they’re allowed to do won’t exist for years.

That gap is where things break. Not because the agents are malicious, but because nobody decided what “fair” means before they started negotiating.

Part 5 of “The Agentic User Experience” series. Previous: Agent Protocols.